The most oppressed constituency in AI safety is the shareholders

Give a voice to the voiceless (the brave capitalists risking their estates in the pursuit of self-interested returns)

In the summer of 1945, J. Robert Oppenheimer watched his work exterminate, by some accounts, at least 200,000 Japanese civilians. It was only at the hydrogen bomb that Oppenheimer later drew his moral line. The lesson is not that he was wrong to oppose the hydrogen bomb. The lesson is that he had already made the Faustian bargain. Dario Amodei appears to be replaying this in real time.

Anthropic was the first major AI lab to deploy its technology on classified U.S. military networks. Claude was put to work analyzing intelligence, planning operations and conducting cyberwarfare for the Pentagon. In July 2025, Anthropic signed a $200 million contract with the Department of War. Sorry, yes, the Department of WAR.

Satisfied as a customer, the Pentagon asked for more. Specifically, it asked for an “all lawful purposes” policy, which could include the freedom to use Claude for autonomous weapons and mass surveillance. Anthropic said no. Amodei had red lines: no autonomous weapons, no mass surveillance of American citizens. The Pentagon did not take this well. But the real oppressed constituency in the whole story, the one nobody thinks about, got doubly betrayed: the shareholders.

Prof. Theo Vermaelen at INSEAD has spent years developing an ethical framework that treats the firm as a set of contracts. The core argument is simple: managers operate on delegated authority from shareholders. In practice, shareholders have very few explicit rights. They come last in the waterfall, after customers, creditors, often even employees. The implicit contract between manager and shareholder is that the manager will responsibly look after the shareholder’s interests. When managers deviate to pursue their own moral preferences, they are instead spending shareholders’ money purely on their own moral consumption, and are therefore behaving unethically.

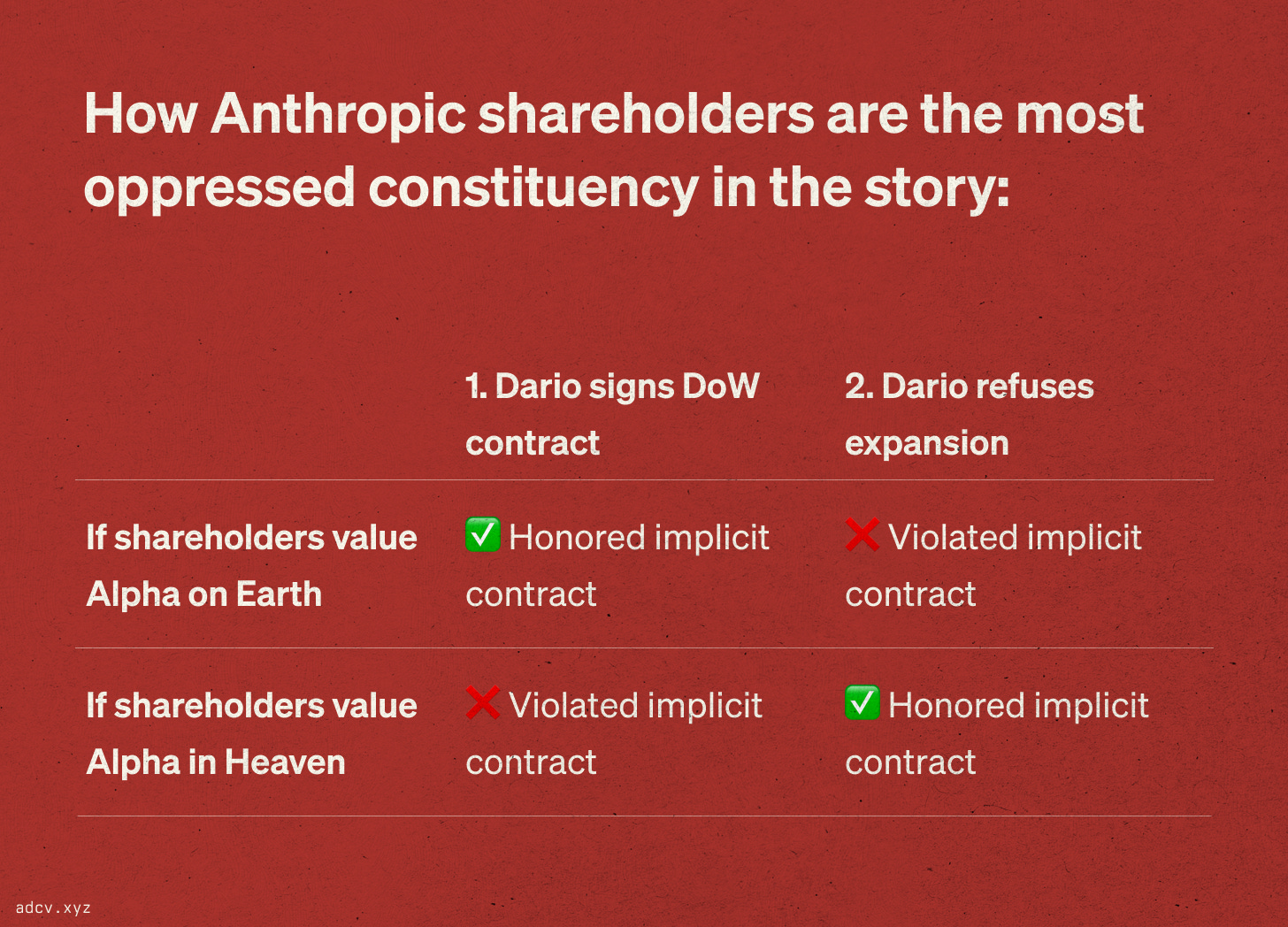

Vermaelen frames this as the tension between “Alpha on Earth” (financial returns) and “Alpha in Heaven” (moral and reputational benefits that accrue to managers but may not translate to shareholder value). When they conflict, honoring the ethical obligation means committing to Alpha on Earth, unless shareholders explicitly opt in to Alpha in Heaven (forgoing Alpha on Earth). You can’t always get what you want; you can’t pursue both.

Consider the two decisions Amodei made.

First, he signed the $200 million contract. Anthropic, a Public Benefit Corporation founded by safety-minded refugees from OpenAI, agreed to provide its most advanced AI system to the world’s most powerful military for use in intelligence, operations planning and cyberwarfare. Whatever scope limitations were negotiated, the relationship was established. The money was real. This was Amodei choosing Alpha on Earth.

Second, he refused to expand the engagement into autonomous weapons and surveillance. This was Alpha in Heaven. The principled stand, the red line, the thing that plays well in press releases and recruiting pitches to idealistic engineers ahead of an expected IPO.

The problem is that these two decisions cannot both be justified under the implicit contract framework, because between them they disregard the preferences of the only relevant stakeholders, namely Anthropic’s shareholders.

If shareholders invested expecting financial returns, then signing the contract was correct but refusing to expand was a violation. You already took the Pentagon’s money. You already deployed Claude for cyberwarfare. You chose this client. Now you’re leaving revenue on the table and torpedoing the relationship with the world’s deepest-pocketed buyer because of a personal moral preference about the next incremental use case. The line between “cyberwarfare” and “autonomous weapons” is not a bright line. It is a gradient, and you stepped onto it voluntarily.

If shareholders invested expecting safety, then refusing the expansion was correct but signing the original contract was the betrayal. If investors gave eye-watering valuations because they believed in responsible AI development, then deploying Claude on classified military networks for cyberwarfare was the violation, not the refusal to go further. Amodei could have avoided all this by simply never taking the call.

Either way, the shareholders are the most betrayed group in this whole story, and have had their implicit contracts absolutely destroyed by a CEO torn between money and moral consumption.

Contrast this with Sam Altman’s approach. OpenAI publicly supported Anthropic’s stance on AI safety, then immediately submitted a bid to replace Anthropic’s contract. Not only is this not hypocrisy, it is perfectly ethical behavior from the CEO of OpenAI, because his shareholders know what they are getting. The implicit contract is respected. In fact, the board of OpenAI has gone to considerable trouble and expense to clarify precisely this Heaven v. Earth trade-off.

Dario, on the other hand, wants to mold the world in his own image. He sells Heaven to his employees and social entourage but sells Earth to his investors and finds he cannot deliver both. The manager who believes his moral judgment should override his shareholders’ capital allocation is not a steward. He is a priest spending the congregation’s tithes on his own preferred charity.

Nobody in this story is thinking about Anthropic’s shareholders. The debate is framed entirely as a contest between national security hawks and safety advocates. These are legitimate positions. But the people who actually put up billions of dollars to fund this company are invisible. If they invested expecting returns, Dario just destroyed a $200 million relationship and invited a government blacklist. If they invested expecting safety, Dario already betrayed them when he signed the contract.

The real test of AI safety is not whether Dario Amodei can draw red lines for the Pentagon. It’s whether anyone thought to ask his shareholders where those lines should be.

I, for one, believe we should give a voice to the voiceless (the brave capitalists risking their estates in the pursuit of self-interested returns).